AI and Data Privacy in Workplace Productivity Monitoring

Learn how AI-powered workplace productivity monitoring affects employee data privacy, compliance, transparency, accountability, and trust in organizations.

AI is changing workplace productivity monitoring, but it’s also raising serious questions about employee privacy.

Modern AI tools can track productivity, detect burnout risks, and analyze work patterns in real time. While these features improve efficiency, they also create growing concerns about how employee data is collected and used.

With stricter laws like the EU AI Act and GDPR, businesses must now balance productivity, privacy, and compliance. In this blog, I’ll explain the biggest AI monitoring trends, privacy risks, and best practices for responsible use.

How AI Changed Productivity Monitoring

AI has completely changed how workplace productivity monitoring works. Instead of simply collecting activity data for later review, modern tools now analyze it in real time and automatically identify patterns.

AI-powered systems can predict burnout risk, measure productivity trends, and even analyze sentiment in communication through messages, audio, and video. Productivity monitoring is no longer just about tracking activity; it’s about using data to make faster and smarter decisions.

| Capability | Before AI | With AI |
|---|---|---|
| Activity classification | Manual rules, role-by-role | Automatic, learns each user's baseline |
| Productivity scoring | Aggregate hours and output | Role-based scoring with anomaly detection |
| Burnout signals | After-the-fact reporting | Predictive flags from focus-time drift and after-hours patterns |
| Screen capture | Periodic screenshots only | OCR + content classification on captures |
| Workload imbalance | Manual analysis | Team-level patterns detected automatically |
| Coaching prompts | Manager-driven | AI-suggested talking points based on data |

The key issue is not whether AI is good or bad. It’s that modern monitoring tools now collect more data, make smarter predictions, and influence workplace decisions faster than before. That shift is exactly why employers are paying closer attention.

Key AI & Data Privacy Laws in 2026

AI-powered workplace monitoring tools are becoming more advanced, but regulations around employee data and AI usage are also becoming stricter. Businesses using these tools must understand the major privacy and compliance laws that apply in 2026.

1. EU AI Act

The EU AI Act applies to companies operating in the EU or providing AI systems that affect EU residents. The law bans certain AI practices, including emotion recognition in workplaces, except in limited safety-related situations. Many AI-based monitoring systems fall under the 'high-risk' category, requiring stricter transparency, oversight, and data governance measures.

- Prohibited AI practices apply from February 2025

- High-risk AI obligations apply from August 2026

- Maximum penalties: Up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices

2. GDPR

The General Data Protection Regulation (GDPR) requires organizations to have a lawful basis for collecting and processing employee data. This includes AI-generated insights and behavioral analysis. Companies must also ensure transparency, data minimization, employee rights protection, and Data Protection Impact Assessments (DPIAs) for large-scale or systematic monitoring.

- In force since: 2018

- Maximum penalties: Up to €20 million or 4% of global annual turnover, whichever is higher

Several major enforcement actions have already targeted workplace monitoring practices, including fines against Amazon France Logistique and H&M Germany for excessive employee data collection and monitoring.

3. US State Privacy Laws

Workplace monitoring laws in the United States vary by state. States such as Connecticut, Delaware, and New York require employers to provide notice before electronic monitoring begins.

Illinois’ Biometric Information Privacy Act (BIPA) is especially important for AI monitoring tools that use facial recognition, voice analysis, or biometric tracking, as it requires informed consent before collecting biometric data. States like California and Colorado also impose rules around sensitive personal data and automated profiling.

4. UK GDPR & Data Protection Act 2018

The UK follows a GDPR-style framework through the UK GDPR and the Data Protection Act 2018. The UK Information Commissioner’s Office (ICO) has also issued workplace monitoring guidance that addresses AI-driven monitoring, employee transparency, and restrictions around covert surveillance.

- Maximum penalties: Up to £17.5 million or 4% of global annual turnover, whichever is higher
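To see what the penalty caps quoted for the EU AI Act, GDPR, and UK GDPR mean for a specific company, here is a minimal Python sketch. It assumes the top-tier rule in which the cap is the higher of the fixed amount and the turnover percentage. The names `PenaltyCap`, `CAPS`, and `max_penalty` are illustrative, and the result is a theoretical ceiling in the regime's own currency, not a prediction of any actual fine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyCap:
    fixed_amount: int       # fixed ceiling, in the regime's own currency
    turnover_percent: int   # percentage of global annual turnover


# Figures as quoted in this article (top penalty tier only).
CAPS = {
    "eu_ai_act": PenaltyCap(35_000_000, 7),
    "gdpr": PenaltyCap(20_000_000, 4),
    "uk_gdpr": PenaltyCap(17_500_000, 4),
}


def max_penalty(regime: str, global_turnover: float) -> float:
    """Return the theoretical maximum penalty: the higher of the two limits."""
    cap = CAPS[regime]
    return max(cap.fixed_amount, global_turnover * cap.turnover_percent / 100)


# A company with 1 billion in global annual turnover:
print(max_penalty("eu_ai_act", 1_000_000_000))  # 70000000.0
# A company with 100 million in turnover stays at the fixed GDPR ceiling:
print(max_penalty("gdpr", 100_000_000))         # 20000000
```

For large organizations the percentage usually dominates, which is why the turnover-based figure matters more than the headline amount.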

5. Asia-Pacific Privacy Laws

Countries across the Asia-Pacific region are strengthening privacy laws related to employee data and AI processing. India’s Digital Personal Data Protection Act (DPDPA), Singapore’s PDPA, and Australia’s Privacy Act all include consent, transparency, and purpose-limitation requirements that affect workplace monitoring practices.

While these laws are not yet as broad as the EU AI Act, several countries are actively developing AI-specific regulations expected to expand between 2026 and 2027.

Organizations using AI monitoring tools should focus not only on productivity and automation, but also on transparency, fairness, employee trust, and responsible data handling. Strong compliance practices are becoming a core part of ethical workplace monitoring.

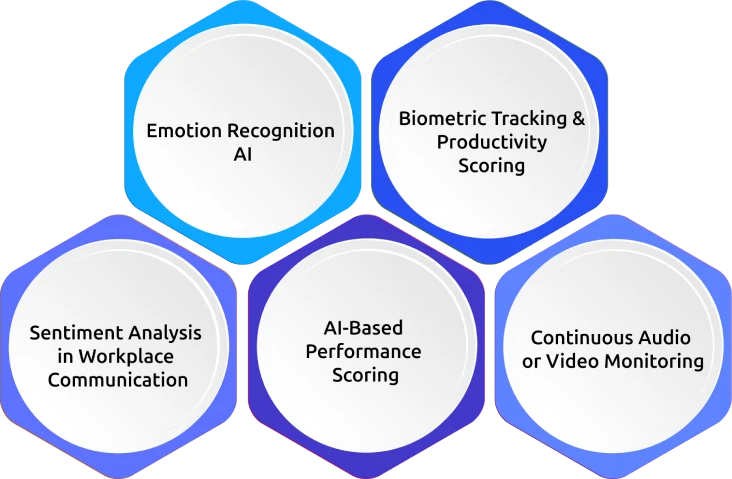

High-Risk AI Features in Workplace Monitoring

Not all AI monitoring features carry the same level of privacy risk. Some features raise serious legal, ethical, and employee trust concerns, especially under employee privacy laws.

1. Emotion Recognition AI

AI systems that detect emotions through facial expressions, voice patterns, or behavioral signals are considered extremely high risk. The EU AI Act bans emotion recognition in workplaces except for limited safety-related use cases.

2. Biometric Tracking & Authentication

Features such as facial recognition logins, fingerprint tracking, and voice-based authentication involve sensitive biometric data. Laws like Illinois BIPA and GDPR require strict consent and data protection measures before using these systems.

3. Sentiment Analysis in Workplace Communication

Some AI tools analyze employee emails, chats, or messages to measure mood, engagement, or behavior patterns. While technically possible, these features can quickly create privacy concerns and reduce employee trust if used without transparency and clear limitations.

4. AI-Based Performance Scoring

AI-generated productivity or performance scores used for promotions, compensation, hiring, or termination decisions carry major compliance risks. Under Article 22 of the GDPR, employees have the right not to be subject to decisions based solely on automated processing that significantly affect them, and they can request human review of such decisions.
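One way to make human review concrete is to build it into the workflow, so that a score-based decision cannot take effect until a named person has signed off with written reasoning. The sketch below is a hypothetical illustration in Python. The class `ScoreBasedDecision` and its fields are assumptions, not part of any real product or a statement of what the law procedurally requires.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScoreBasedDecision:
    employee_id: str
    decision: str            # e.g. "promotion" or "termination"
    ai_score: float          # the AI-generated input, never the final word
    reviewer: Optional[str] = None
    reviewer_notes: str = ""

    def approve(self, reviewer: str, notes: str) -> None:
        """Record a human sign-off; empty reasoning is rejected."""
        if not notes.strip():
            raise ValueError("a human reviewer must record their reasoning")
        self.reviewer = reviewer
        self.reviewer_notes = notes

    @property
    def can_take_effect(self) -> bool:
        """A decision is only actionable after a human has reviewed it."""
        return self.reviewer is not None


decision = ScoreBasedDecision("emp-001", "promotion", ai_score=0.82)
print(decision.can_take_effect)  # False
decision.approve("hr-lead", "Score matches the last two peer reviews.")
print(decision.can_take_effect)  # True
```

Requiring notes, not just a click, helps keep the review meaningful instead of a rubber stamp.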

5. Continuous Audio or Video Monitoring

Continuous microphone or camera monitoring is considered highly sensitive in most privacy frameworks. Even when vendors promise anonymized data, continuous monitoring can still raise serious privacy, compliance, and ethical concerns.

Before deploying any AI monitoring platform, carefully review how these features collect data, make decisions, and impact employee privacy.

Safer AI Features for Workplace Monitoring

Not every AI feature creates major privacy risks. Many AI-powered productivity tools can be used responsibly when they focus on improving workflows rather than deeply monitoring individual behavior.

1. Activity Pattern Detection

AI can identify unusual changes in work patterns without trying to predict emotions or personal intent. When based on lawfully collected data, this approach carries lower risk.
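As a rough illustration, pattern detection of this kind can be as simple as comparing today's value with the person's own recent history. The Python sketch below uses a basic standard-deviation check. The function name, the five-day minimum, and the threshold of 2.0 are all assumptions chosen for the example, and real tools may use very different models.

```python
from statistics import mean, stdev


def deviates_from_baseline(history, today, threshold=2.0):
    """Return True when today's value is far from the person's own baseline.

    history: past daily values (for example, focus hours per day)
    threshold: how many standard deviations count as unusual (assumed)
    """
    if len(history) < 5:
        return False  # too little history to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today != baseline
    return abs(today - baseline) / spread > threshold


focus_hours = [6.0, 6.5, 5.5, 6.0, 6.0]
print(deviates_from_baseline(focus_hours, 2.0))  # True
print(deviates_from_baseline(focus_hours, 6.2))  # False
```

The check only says that something changed. It makes no guess about mood or intent, which is what keeps this approach on the lower-risk side.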

2. Role-Based App Classification

Some tools automatically classify apps and websites based on job roles. For example, a design tool may be marked productive for designers but not for finance teams. This type of rule-based automation carries relatively low privacy risk.
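A minimal sketch of this idea in Python might look like the following. The role names, app names, and labels are made up for illustration, and a real tool would load these rules from its own configuration.

```python
# Hypothetical rule table: which apps count as productive for which role.
PRODUCTIVE_APPS_BY_ROLE = {
    "designer": {"figma", "photoshop"},
    "finance": {"excel", "quickbooks"},
}


def classify_app(role: str, app: str) -> str:
    """Label an app for a given role using plain lookup rules."""
    allowed = PRODUCTIVE_APPS_BY_ROLE.get(role)
    if allowed is None:
        return "unclassified"  # no rules defined for this role yet
    return "productive" if app.lower() in allowed else "neutral"


print(classify_app("designer", "Figma"))  # productive
print(classify_app("finance", "Figma"))   # neutral
```

Because the rules are a plain lookup table, anyone can read them and see exactly why an app was labeled the way it was.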

3. Team-Level Productivity Insights

AI-generated insights focused on team productivity, meeting load, or focus time are safer because they rely on aggregated data instead of detailed individual tracking.

4. AI-Assisted Coaching Suggestions

AI can support you by highlighting workload trends or productivity patterns without making decisions itself. Human review and oversight remain important in these cases.

5. Team Burnout & Capacity Forecasting

Predicting workload pressure or staffing needs at the team level is less invasive than scoring individual employees. These insights can help you improve planning while reducing privacy concerns.

The safest AI monitoring features usually follow three principles: collecting only necessary data, using aggregated insights where possible, and keeping humans involved in important decisions.
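The aggregation principle can be sketched in a few lines of Python. The example below reports only team-level averages and hides any team that is too small for an average to protect individuals. The minimum group size of 5 and the function name `team_focus_summary` are assumptions for illustration, not a legal or industry standard.

```python
from collections import defaultdict
from statistics import mean

MIN_GROUP_SIZE = 5  # assumed threshold; choose your own with your privacy team


def team_focus_summary(records, min_group_size=MIN_GROUP_SIZE):
    """Summarize focus hours per team without exposing individuals.

    records: iterable of (team, employee_id, focus_hours) tuples
    returns: {team: average focus hours, or None when the team is too small}
    """
    by_team = defaultdict(dict)
    for team, employee_id, focus_hours in records:
        by_team[team].setdefault(employee_id, []).append(focus_hours)

    summary = {}
    for team, per_person in by_team.items():
        if len(per_person) < min_group_size:
            summary[team] = None  # suppressed: too few people to aggregate
        else:
            personal_averages = [mean(hours) for hours in per_person.values()]
            summary[team] = round(mean(personal_averages), 2)
    return summary


records = [("design", f"d{i}", 4.0) for i in range(5)]
records += [("legal", "l1", 3.0), ("legal", "l2", 5.0)]
print(team_focus_summary(records))  # {'design': 4.0, 'legal': None}
```

The raw per-person numbers never leave the function, so dashboards built on its output cannot be used to single anyone out.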

How to Evaluate an AI Monitoring Vendor

Before choosing an AI-powered workplace productivity monitoring platform, carefully review the vendor’s privacy, security, and compliance practices. Asking the right questions early helps you prevent legal, operational, and employee trust issues down the line.

Key Questions to Ask Vendors

- Which AI features are enabled by default, especially for EU employees?

- Where is employee data stored, processed, and transferred?

- Does the platform classify any AI systems as “high-risk” under the EU AI Act?

- How are AI-generated insights reviewed before influencing workplace decisions?

- Does the vendor provide DPIA support or privacy assessment documentation?

- What are the data retention policies for monitoring and AI training data?

- Is there an audit trail for AI-generated alerts, decisions, or recommendations?

- Which compliance certifications are currently maintained?

Verify the vendor’s claims about standards such as ISO 27001, SOC 2, HIPAA, and GDPR compliance, along with any industry-specific certifications where applicable.

A strong vendor will provide transparency around how AI works, how employee data is protected, and how automated insights are reviewed.
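When a vendor answers the retention question, it helps to ask for something as concrete as a schedule you could check in code. Here is a small Python sketch of what that could look like. The data categories and retention periods are invented for the example and are not recommendations.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention schedule, in days per data category.
RETENTION_DAYS = {
    "screenshot": 30,
    "activity_log": 90,
}


def is_expired(kind: str, collected_at: datetime, now: datetime) -> bool:
    """Return True when a record has outlived its documented retention period.

    Raises KeyError for a category with no documented period, so that
    undocumented data types surface instead of being kept silently.
    """
    return now - collected_at > timedelta(days=RETENTION_DAYS[kind])


now = datetime(2026, 6, 1, tzinfo=timezone.utc)
old_capture = datetime(2026, 4, 1, tzinfo=timezone.utc)
print(is_expired("screenshot", old_capture, now))    # True
print(is_expired("activity_log", old_capture, now))  # False
```

If a vendor cannot describe retention at this level of detail, that is a useful signal in itself.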

How to Build an AI & Privacy Policy for Workplace Monitoring

A strong AI monitoring policy clearly explains how employee data is collected, used, and protected. To stay compliant and build employee trust, focus on three key areas.

1. Create an AI Feature Inventory

Start by listing every AI feature used in the monitoring system. Document what data each feature collects, what insights it generates, and whether it influences workplace decisions. Make sure to regularly review the features to keep them accurate and updated.

2. Define Purpose & Legal Basis

Make sure each AI feature has a clear business purpose and a valid legal basis for processing employee data. You should be able to explain why the monitoring is necessary and proportionate to the business need. This step is especially important for GDPR compliance and DPIAs.
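The first two steps can live in one simple record per feature. The Python sketch below shows one possible shape for an inventory entry and a helper that lists what is still missing. The field names and the rule that decision-influencing features need a DPIA on record are assumptions for this example, so adapt them to your own legal advice.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIFeature:
    name: str
    data_collected: List[str]
    insights: str
    influences_decisions: bool
    purpose: str = ""
    legal_basis: str = ""
    dpia_done: bool = False


def find_gaps(feature: AIFeature) -> List[str]:
    """List the documentation gaps for one inventory entry."""
    issues = []
    if not feature.purpose.strip():
        issues.append("missing purpose")
    if not feature.legal_basis.strip():
        issues.append("missing legal basis")
    # Assumed internal rule: decision-influencing features need a DPIA.
    if feature.influences_decisions and not feature.dpia_done:
        issues.append("influences decisions but has no DPIA on record")
    return issues


scoring = AIFeature(
    name="productivity scoring",
    data_collected=["app usage", "active hours"],
    insights="weekly productivity score",
    influences_decisions=True,
    purpose="capacity planning",
)
print(find_gaps(scoring))
# ['missing legal basis', 'influences decisions but has no DPIA on record']
```

Running a check like this on a schedule is an easy way to keep the inventory accurate as features change.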

3. Maintain Transparency with Employees

Make sure employees clearly understand what is being monitored, how AI analyzes the data, and how the information may affect workplace decisions. Explain employee rights, including access, correction, and review options. Keep transparency notices simple, easy to understand, and updated regularly.

How Time Champ Supports AI Monitoring & Employee Privacy

Time Champ is employee monitoring software with a workforce intelligence layer and privacy-focused workforce management features. The platform improves productivity without depending on overly intrusive monitoring practices.

Features such as time tracking, productivity analysis, app and website usage tracking, attendance monitoring, task management, and workforce analytics give you better visibility into work patterns and team performance.

To support privacy and compliance requirements, Time Champ offers configurable monitoring controls, customizable screenshot settings, role-based access, and activity filtering options. You can adjust monitoring levels based on operational and legal requirements. The platform also supports major compliance standards, including GDPR, ISO 27001:2022, HIPAA, and SOC 2 Type I.

Time Champ is designed to provide meaningful productivity insights that improve efficiency, accountability, and overall workforce management.


Conclusion

AI is making workplace productivity monitoring smarter and more data-driven, but it also raises important privacy and compliance concerns. Balance productivity insights with employee trust, transparency, and evolving regulations. Using ethical practices and privacy-focused monitoring tools helps improve performance while maintaining a fair, transparent, and compliant work environment.
